Diptanil Chaudhuri and Rhema Ike and Hazhar Rahmani and Dylan A. Shell and Aaron T. Becker and Jason M. O'Kane

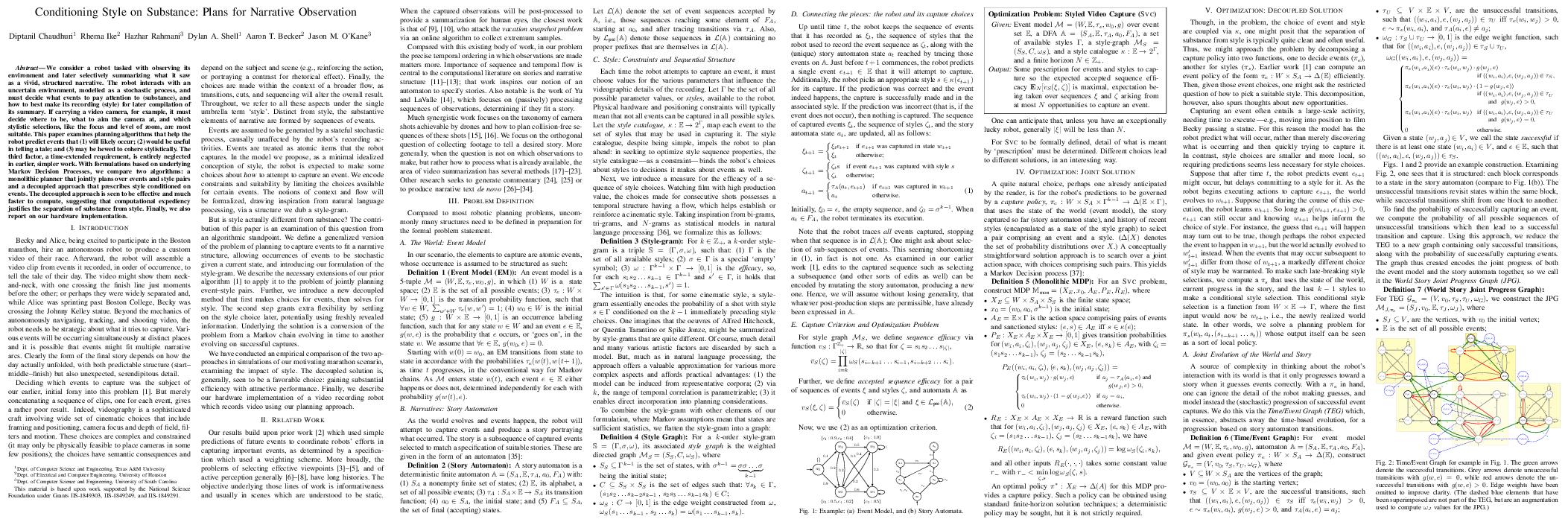

Abstract We consider a robot tasked with observing its environment and later selectively summarizing what it saw as a vivid, structured narrative. The robot interacts with an uncertain environment, modelled as a stochastic process, and must decide which events to pay attention to (substance) and how best to make its recording (style) for later compilation of its summary. If carrying a video camera, for example, it must decide where to be, what to aim the camera at, and which stylistic choices, such as focus and zoom level, are most suitable. This paper examines planning algorithms that help the robot predict events that (1) will likely occur; (2) would be useful in telling a tale; and (3) can be made to cohere stylistically. The third factor, a time-extended requirement, is entirely neglected in earlier, simpler work. Using formulations based on underlying Markov Decision Processes, we compare two algorithms: a monolithic planner that plans jointly over event-style pairs, and a decoupled approach that prescribes style conditioned on events. The decoupled approach proves effective and is much faster to compute, suggesting that computational expediency justifies separating substance from style. Finally, we report on our hardware implementation.
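To make the monolithic versus decoupled contrast concrete, here is a toy finite-horizon sketch in Python. It is not the authors' formulation: the three event states, two camera actions, two styles (wide shot vs. close-up), the reward values, the style-switching penalty standing in for the time-extended coherence requirement, and the names `plan_monolithic` and `plan_decoupled` are all hypothetical. The monolithic planner runs dynamic programming over (event state, previous style) and picks action-style pairs jointly; the decoupled planner first plans actions from the event reward alone, then picks styles with that event policy held fixed.

```python
# Toy sketch: monolithic vs. decoupled finite-horizon planning.
# Every model quantity here is a hypothetical assumption, not the paper's.

STATES = (0, 1, 2)   # event states of the environment
ACTIONS = (0, 1)     # substance: e.g. where the camera is aimed
STYLES = (0, 1)      # style: e.g. 0 = wide shot, 1 = close-up
HORIZON = 4
SWITCH_COST = 0.5    # coherence penalty for changing style between steps
START = (0, 0)       # initial (event state, previous style)


def transitions(s, a):
    """Stochastic event dynamics as (probability, next_state) pairs."""
    return [(0.7, (s + a) % 3), (0.3, (s + 1) % 3)]


def event_reward(s, a):
    """Substance: reward for aiming at the event that is happening."""
    return 1.0 if a == s % 2 else 0.0


def style_reward(s, y_prev, y):
    """Style: bonus for a suitable style, minus a penalty for switching."""
    r = 0.6 if y == (1 if s == 2 else 0) else 0.0
    return r - (SWITCH_COST if y != y_prev else 0.0)


def plan_monolithic():
    """Jointly choose (action, style) pairs over states (s, y_prev)."""
    V = {(s, yp): 0.0 for s in STATES for yp in STYLES}
    backups = 0
    for _ in range(HORIZON):
        new_V = {}
        for s in STATES:
            for yp in STYLES:
                best = float("-inf")
                for a in ACTIONS:
                    for y in STYLES:
                        backups += 1
                        q = (event_reward(s, a) + style_reward(s, yp, y)
                             + sum(p * V[(s2, y)] for p, s2 in transitions(s, a)))
                        best = max(best, q)
                new_V[(s, yp)] = best
        V = new_V
    return V[START], backups


def plan_decoupled():
    """Plan substance first, then style conditioned on the chosen events."""
    backups = 0
    # Stage 1: substance-only DP over event states; store the event policy.
    Ve = {s: 0.0 for s in STATES}
    event_policy = [None] * HORIZON
    for t in reversed(range(HORIZON)):
        step, new_Ve = {}, {}
        for s in STATES:
            best_q, best_a = float("-inf"), None
            for a in ACTIONS:
                backups += 1
                q = event_reward(s, a) + sum(p * Ve[s2] for p, s2 in transitions(s, a))
                if q > best_q:
                    best_q, best_a = q, a
            step[s], new_Ve[s] = best_a, best_q
        event_policy[t], Ve = step, new_Ve
    # Stage 2: choose styles with actions fixed; the value includes the event
    # reward, so it is the full expected return of the combined plan.
    V = {(s, yp): 0.0 for s in STATES for yp in STYLES}
    for t in reversed(range(HORIZON)):
        new_V = {}
        for s in STATES:
            a = event_policy[t][s]
            for yp in STYLES:
                best = float("-inf")
                for y in STYLES:
                    backups += 1
                    q = (event_reward(s, a) + style_reward(s, yp, y)
                         + sum(p * V[(s2, y)] for p, s2 in transitions(s, a)))
                    best = max(best, q)
                new_V[(s, yp)] = best
        V = new_V
    return V[START], backups


if __name__ == "__main__":
    m_val, m_cost = plan_monolithic()
    d_val, d_cost = plan_decoupled()
    print(f"monolithic: value={m_val:.3f}, backups={m_cost}")
    print(f"decoupled:  value={d_val:.3f}, backups={d_cost}")
```

Because the decoupled plan is one feasible joint policy, its value can never exceed the monolithic optimum. Its work per step, however, scales like |S||A| + |S||Y|² instead of |S||A||Y|² (72 versus 96 backups in this tiny model), and the gap widens as the action and style sets grow.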

@inproceedings{ChaIke+21,
  author = {Diptanil Chaudhuri and Rhema Ike and Hazhar Rahmani and Dylan A. Shell and Aaron T. Becker and Jason M. O'Kane},
  booktitle = {Proc. IEEE International Conference on Robotics and Automation},
  title = {Conditioning style on substance: Plans for narrative observation},
  year = {2021}
}